Scrapy Source Code Reading Notes (1): Spider

2016-11-25

troy_ld

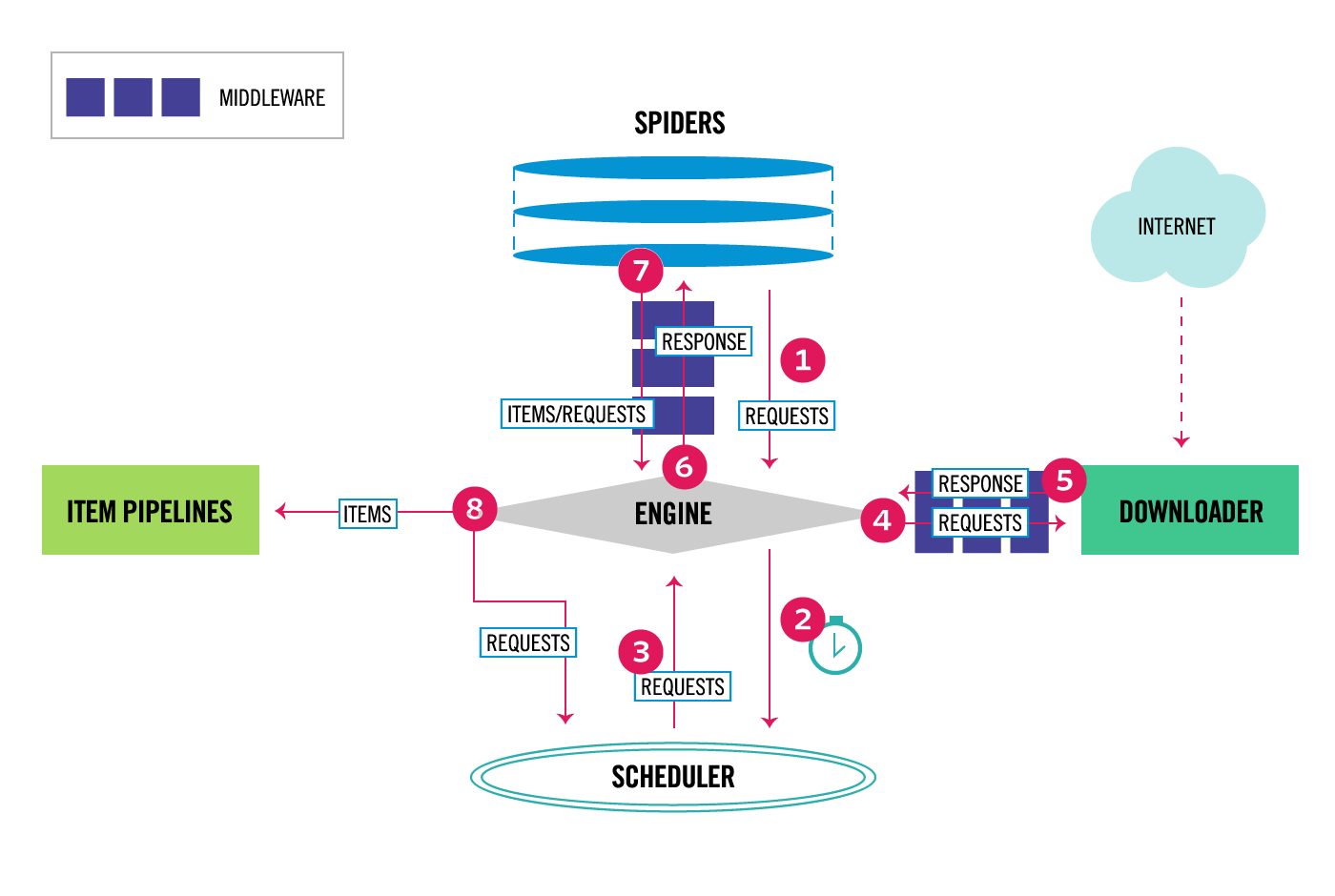

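Data flow

About Spider

In my view, Spider is mainly responsible for generating Requests and processing (parsing) Responses. Beyond these two jobs, though, customizing a Spider sensibly across different scenarios requires understanding every attribute and method (ideally by reading the source code). Below is my summary. ("Required" means required as far as the crawl flow is concerned.)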

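| Attribute / method | Role | Required | Description |
|---|---|---|---|
| name | ID | Yes | The ID Scrapy uses to identify the spider |
| start_urls | Seeds | No | The crawl entry point Scrapy recommends |
| allowed_domains | Domain filter | No | Filters out URLs outside the listed domains |
| custom_settings | Parameters | No | Settings specific to each spider |
| crawler | Interaction | Yes | Bound to the spider; interacts with the engine |
| settings | Configuration | Yes | Imports the settings |
| logger | Logging | Yes | Comes straight from the Python standard library |
| from_crawler | Instantiation entry point | Yes | The Scrapy-style entry point for instantiation |
| start_requests | Initialization | Yes | The initial Request generator Scrapy recommends |
| make_requests_from_url | Request construction | No | Builds a Request with dont_filter=True |
| parse | Parsing | Yes | Callback of the Request; returns Items or Requests |
| log | Logging | Yes | Wraps logger |
| close | Signal handling | | Customizable; handles the spider_closed signal |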

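Analysis

- parse(response)

As the Request's callback, its job is simple and its logic is clear, yet it is probably the most time-consuming step in development, and also the easiest place to write ugly code. In the end it comes down to the complexity of field extraction and data cleaning.

Scrapy's recommended practice is to abstract the parsing and cleaning scheme and plug it in through ItemLoader, which keeps the code remarkably concise. I am planning to serialize the field definitions, parsing rules, and cleaning rules into a database and select them each time according to features of the URL (for example domain or path).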
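The plan of keeping field definitions, parsing rules, and cleaning rules outside the spider and choosing them per URL could look roughly like the sketch below. Everything in it is hypothetical: the `RULES` dict stands in for the database, the domains and regexes are made up, and plain `re` replaces Scrapy selectors and ItemLoader only to keep the sketch self-contained.

```python
import re
from urllib.parse import urlparse

# Hypothetical rule store; in the plan above this would live in a database.
# Each field maps to (extraction regex, cleaning function).
RULES = {
    "example.com": {
        "title": (r"<h1>(.*?)</h1>", str.strip),
        "price": (r'class="price">\s*\$([\d.]+)', float),
    },
    "example.org": {
        "title": (r"<title>(.*?)</title>", str.strip),
    },
}


def select_rules(url):
    """Pick the rule set by the URL's domain (the path could be used too)."""
    domain = urlparse(url).netloc.lower()
    if domain.startswith("www."):
        domain = domain[4:]
    return RULES.get(domain, {})


def parse_with_rules(url, html):
    """Apply every rule for this URL; fields that do not match are skipped."""
    item = {}
    for field, (pattern, clean) in select_rules(url).items():
        match = re.search(pattern, html, re.S)
        if match:
            item[field] = clean(match.group(1))
    return item
```

With this shape, a spider's `parse` would shrink to a single call such as `parse_with_rules(response.url, response.text)`, and supporting a new site would mean adding a row of rules rather than new code.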

- crawler

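If you want to understand how Scrapy works, there is no getting around this thing. Scrapy is modular, but many modules and plugins pull in settings through the crawler when they initialize; if you want to invoke a spider from a backend, you also need some understanding of the crawler. As I see it, the crawler exists to manage the Spider: it wraps the API for initializing, starting, and stopping a spider. If you are curious enough, take a close look at scrapy.crawler.CrawlerProcess. Three months ago, when I first tried to hook Scrapy into Flask, a pile of Twisted errors nearly did me in.

Tip: run it in a separate process.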
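One way to follow this tip from a web app is to shell out to the `scrapy crawl` command instead of starting the Twisted reactor inside the Flask process. A minimal sketch, assuming the working directory is a Scrapy project; the helper names `build_crawl_command` and `run_spider` are hypothetical, while `-a` (spider argument) and `-s` (setting override) are options of `scrapy crawl`.

```python
import subprocess


def build_crawl_command(spider_name, spider_args=None, settings=None):
    """Build the argv for `scrapy crawl`, e.g. from a Flask view's input."""
    cmd = ["scrapy", "crawl", spider_name]
    for key, value in sorted((spider_args or {}).items()):
        cmd += ["-a", "%s=%s" % (key, value)]  # passed to Spider.__init__
    for key, value in sorted((settings or {}).items()):
        cmd += ["-s", "%s=%s" % (key, value)]  # overrides project settings
    return cmd


def run_spider(spider_name, project_dir=".", **kwargs):
    """Run the crawl in its own process so each run gets a fresh reactor."""
    cmd = build_crawl_command(spider_name, **kwargs)
    return subprocess.run(cmd, cwd=project_dir).returncode
```

Passing the command as a list rather than a shell string avoids quoting problems when the arguments come from user input.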

- from_crawler(crawler, *args, **kwargs)

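This is the coding style Scrapy recommends for instantiating an object (a middleware or a module).

"This is the class method used by Scrapy to create your spiders". The crawler often appears in object initialization, where its job is to supply crawler.settings.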

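The same convention can be sketched for a non-spider component: the class method receives the crawler, pulls what it needs from `crawler.settings`, and calls the plain constructor. `RetryBudget` and the `RETRY_BUDGET` setting are made-up names, and a `SimpleNamespace` holding a dict stands in for a real crawler so the snippet runs without Scrapy.

```python
from types import SimpleNamespace


class RetryBudget:
    """Hypothetical component; only the from_crawler convention matters."""

    def __init__(self, budget):
        self.budget = budget

    @classmethod
    def from_crawler(cls, crawler):
        # Settings are read here, so __init__ stays free of Scrapy objects
        # and the component is easy to construct directly in tests.
        return cls(budget=crawler.settings.get("RETRY_BUDGET", 3))


# Stand-in for a Scrapy crawler: just an object with a `settings` mapping.
fake_crawler = SimpleNamespace(settings={"RETRY_BUDGET": 5})
component = RetryBudget.from_crawler(fake_crawler)
```

Keeping the settings lookup in `from_crawler` and the state in `__init__` is a design choice of this sketch, not something the source prescribes.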
- close

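In Scrapy's style, this is an asynchronous signal handler. The more common approach is to connect a signal to a chosen method inside from_crawler. It lets you do some fun things, such as emailing yourself when the crawl ends or reporting the crawl's progress at regular intervals.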

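```python
from scrapy import signals

# Defined inside the spider class (Filter is the enclosing class here).
@classmethod
def from_crawler(cls, crawler, *args, **kwargs):
    spider = super(Filter, cls).from_crawler(crawler, *args, **kwargs)
    # Connect each handler to its matching signal.
    crawler.signals.connect(spider.spider_opened, signal=signals.spider_opened)
    crawler.signals.connect(spider.spider_closed, signal=signals.spider_closed)
    return spider
```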

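- Other spiders

Scrapy also maintains CrawlSpider, XMLFeedSpider, and SitemapSpider. Roughly speaking, they make small changes to how Responses are processed and how Requests are filtered. Rather than the different Spider designs, you may be more interested in FormRequest, which is convenient for submitting forms.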

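Follow-ups

- Core modules: Scheduler, Engine, Downloader, Item Pipeline, Middleware

For each core module I will write up my reading notes and share some approaches to overriding it.

- Handling JavaScript and AJAX

JavaScript can be handled with scrapy_splash. Splash looks like a bit of a hodgepodge, but it is damn efficient and renders JavaScript concurrently. For comparison, the PhantomJS web server supports 10 concurrent connections, which is also strong enough; I just don't want to reinvent the wheel.

- "Smart" Response handling

In trendy terms, this is data-driven programming. The abstracted parsing scheme mentioned earlier is one example, though my grasp of the concept is still fuzzy.

- Distributed crawling

The Scrapy maintainers also maintain a distributed crawling framework, Frontera, which integrates a scheduler, strategy, backend API, and message bus. It can be paired with any downloader, or you can use the Scrapy wrapper in Frontera and get a stable setup just by changing the configuration file.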

scrapy.spider.Spider

```python
"""
Base class for Scrapy spiders

See documentation in docs/topics/spiders.rst
"""
import logging
import warnings

from scrapy import signals
from scrapy.http import Request
from scrapy.utils.trackref import object_ref
from scrapy.utils.url import url_is_from_spider
from scrapy.utils.deprecate import create_deprecated_class
from scrapy.exceptions import ScrapyDeprecationWarning


class Spider(object_ref):
    """Base class for scrapy spiders. All spiders must inherit from this
    class.
    """

    name = None
    custom_settings = None

    def __init__(self, name=None, **kwargs):
        if name is not None:
            self.name = name
        elif not getattr(self, 'name', None):
            raise ValueError("%s must have a name" % type(self).__name__)
        self.__dict__.update(kwargs)
        if not hasattr(self, 'start_urls'):
            self.start_urls = []

    @property
    def logger(self):
        logger = logging.getLogger(self.name)
        return logging.LoggerAdapter(logger, {'spider': self})

    def log(self, message, level=logging.DEBUG, **kw):
        """Log the given message at the given log level

        This helper wraps a log call to the logger within the spider, but you
        can use it directly (e.g. Spider.logger.info('msg')) or use any other
        Python logger too.
        """
        self.logger.log(level, message, **kw)

    @classmethod
    def from_crawler(cls, crawler, *args, **kwargs):
        spider = cls(*args, **kwargs)
        spider._set_crawler(crawler)
        return spider

    def set_crawler(self, crawler):
        warnings.warn("set_crawler is deprecated, instantiate and bound the "
                      "spider to this crawler with from_crawler method "
                      "instead.",
                      category=ScrapyDeprecationWarning, stacklevel=2)
        assert not hasattr(self, 'crawler'), "Spider already bounded to a " \
                                             "crawler"
        self._set_crawler(crawler)

    def _set_crawler(self, crawler):
        self.crawler = crawler
        self.settings = crawler.settings
        crawler.signals.connect(self.close, signals.spider_closed)

    def start_requests(self):
        for url in self.start_urls:
            yield self.make_requests_from_url(url)

    def make_requests_from_url(self, url):
        return Request(url, dont_filter=True)

    def parse(self, response):
        raise NotImplementedError

    @classmethod
    def update_settings(cls, settings):
        settings.setdict(cls.custom_settings or {}, priority='spider')

    @classmethod
    def handles_request(cls, request):
        return url_is_from_spider(request.url, cls)

    @staticmethod
    def close(spider, reason):
        closed = getattr(spider, 'closed', None)
        if callable(closed):
            return closed(reason)

    def __str__(self):
        return "<%s %r at 0x%0x>" % (type(self).__name__, self.name, id(self))

    __repr__ = __str__
```
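In the listing, `_set_crawler` connects `close` to the `spider_closed` signal, and `close` in turn forwards to an optional `closed(reason)` method on the spider. The dispatch can be tried in isolation with the sketch below; `MiniSpider`, `MailOnClose`, and `NoHook` are stand-in classes invented for illustration, and only the body of `close` is copied from the source.

```python
class MiniSpider:
    """Stand-in that copies only Spider.close from the listing."""

    @staticmethod
    def close(spider, reason):
        closed = getattr(spider, "closed", None)
        if callable(closed):
            return closed(reason)


class MailOnClose(MiniSpider):
    def closed(self, reason):
        # A real spider might send an email here, as suggested earlier.
        return "crawl ended: %s" % reason


class NoHook(MiniSpider):
    pass


# Scrapy's signal dispatcher would supply these keyword arguments.
result_with_hook = MailOnClose.close(spider=MailOnClose(), reason="finished")
result_without_hook = NoHook.close(spider=NoHook(), reason="finished")
```

So for the simplest customization it is enough to define `closed` on the spider; overriding `from_crawler` is only needed when other signals have to be connected as well.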